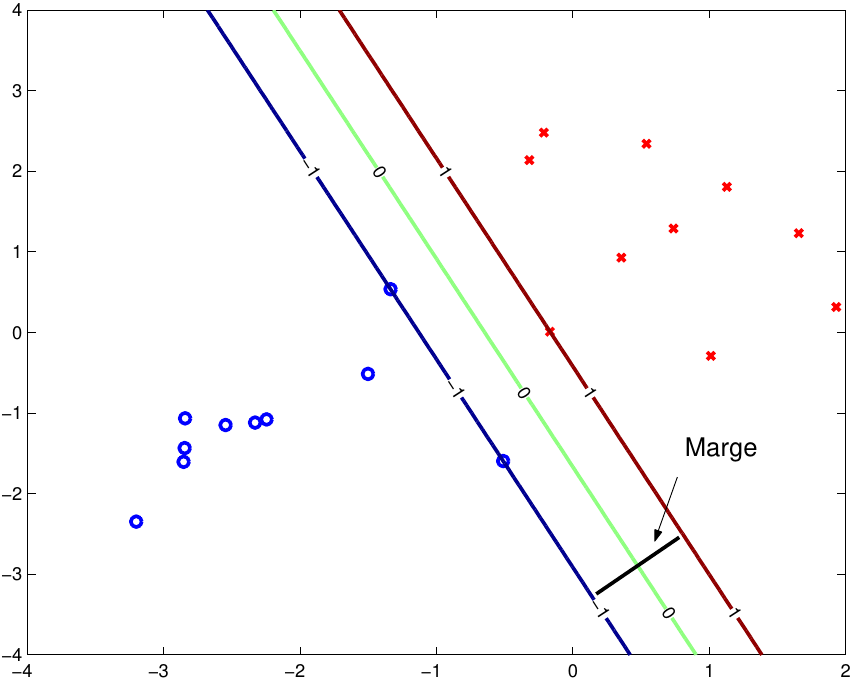

You cannot see the hyperplane itself, because it is a high-dimensional object (in your case 49-dimensional), and in such a projection it would cover the entire view. A plot like this only shows the projections of the points and their classification.

Think about it in these terms: in a 3D space, a hyperplane is a 2D plane. If you try to plot it in 1D, you end up with the whole line "filled" with your hyperplane, because no matter where you place a line in 3D, the projection of the 2D plane onto this line fills it up. The only other possibility is that the line is perpendicular to the plane, and then the projection is a single point. The same applies here: if you try to project a 49-dimensional hyperplane onto 3D, you end up with the whole screen "black", and not a single pixel is left "outside". The one exception is when the 3D view happens to contain the hyperplane's normal vector, which is the analogue of the perpendicular line.

Instead, simply train the SVM and plot it forcing a "pca" visualization, like here. If you are not familiar with the underlying linear algebra, you can simply use the gmum.r package, which does this for you. Its example performs basic classification on a breast cancer dataset: you can pass either a formula or explicit X and Y, train with `core="libsvm", kernel="linear", C=10`, and predict with `Pred <- predict(svm, …)`. For more examples you can refer to the project website.

When the data has only two features, the hyperplane can be drawn directly. The scikit-learn example "Plot the maximum margin separating hyperplane within a two-class separable dataset using a Support Vector Machine classifier with linear kernel" creates 40 separable points with `make_blobs` and draws the boundary with `DecisionBoundaryDisplay` from `sklearn.inspection`. A hand-written alternative uses numpy and matplotlib with a helper `intersect(rect, line)` that clips a line given by its coefficients (a, b, c) to the plotting rectangle (xmin, xmax, ymin, ymax).

A separating hyperplane can be defined by two terms: an intercept term called b and a vector normal to the decision hyperplane called w. All hyperplanes perpendicular to w are parallel to one another, and b selects which of them is meant. Such vectors w are commonly called weight vectors in machine learning.
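In the usual linear-SVM notation, the hyperplane defined by the intercept $b$ and the weight vector $w$, the classifier it induces, and the margin that the SVM maximizes can be written as follows. This sketch assumes labels $y_i \in \{-1, +1\}$, separable data (the hard-margin form, without the $C$ penalty), and the canonical scaling in which the closest points satisfy $|w^\top x_i + b| = 1$:

$$
H = \{\, x \in \mathbb{R}^d : w^\top x + b = 0 \,\}, \qquad \hat{y}(x) = \operatorname{sign}\left(w^\top x + b\right)
$$

$$
\min_{w,\,b}\ \tfrac{1}{2}\lVert w\rVert^2 \quad \text{subject to} \quad y_i\left(w^\top x_i + b\right) \ge 1, \quad i = 1,\dots,n
$$

The distance between the two margin hyperplanes $w^\top x + b = \pm 1$ is $2/\lVert w\rVert$. The support vectors are the training points for which the constraint holds with equality. With $w$ fixed, changing $b$ moves $H$ parallel to itself, and its distance to the origin is $|b|/\lVert w\rVert$. With $d = 50$ features, $H$ has dimension $d - 1 = 49$, which is why it cannot be drawn.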
The circled samples are the support vectors. Source publication: Extraction and…

2 - Optimal separating hyperplane that maximizes the margin in the feature space (the "re-description" space).

In order to plot a 2D/3D decision boundary, your data has to be 2D/3D (2 or 3 features). That is exactly what happens in the link provided: they only have 3 features, so they can plot all of them. With 50 features you are left with statistical analysis, not actual visual inspection. You can obviously take a look at some slices (select 3 features, or the main components of a PCA projection).
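A numerical check helps explain why a PCA slice can show the points and their predicted classes but never the hyperplane. The sketch below is an illustration under stated assumptions, not the gmum.r or scikit-learn implementation. It uses random data with 50 features, and a hypothetical weight vector `w` and intercept `b` stand in for a trained linear SVM. It builds a 2D PCA view with NumPy's SVD. Then, for arbitrary points `p` of that view, it constructs a point of the hyperplane that projects exactly onto `p`. The helper name `hyperplane_point_above` is introduced here for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical setup: 200 points with d = 50 features and a linear classifier
# given by weight vector w and intercept b (stand-ins for a trained linear SVM).
d = 50
X = rng.normal(size=(200, d))
w = rng.normal(size=d)
b = 0.5

# Classification happens in the full 50-dimensional space.
labels = np.sign(X @ w + b)

# PCA "slice": orthonormal basis V (d x 2) of the top two principal components.
mean = X.mean(axis=0)
_, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
V = Vt[:2].T                 # shape (d, 2)
X2 = (X - mean) @ V          # 2-D coordinates you would scatter-plot, colored by labels


def hyperplane_point_above(p, w, b, V, mean):
    """Return a point x with w @ x + b == 0 whose 2-D projection is p.

    Works whenever w is not contained in the span of V, i.e. the viewing
    plane does not contain the hyperplane's normal vector.
    """
    base = mean + V @ p                 # some point that projects onto p
    r = w - V @ (V.T @ w)               # component of w orthogonal to the view
    alpha = -(w @ base + b) / (w @ r)   # w @ r equals ||r||**2 > 0
    return base + alpha * r             # moving along r keeps the projection at p


# Every point of the 2-D view is covered by the hyperplane.
for p in rng.uniform(-5, 5, size=(5, 2)):
    x = hyperplane_point_above(p, w, b, V, mean)
    # On the hyperplane? Projects onto p?
    print(np.isclose(w @ x + b, 0.0), np.allclose((x - mean) @ V, p))
```

The loop prints `True True` for every sampled point. Coloring `X2` by `labels` gives the "points and their classification" picture. Drawing the hyperplane in the same view would paint the whole plane, so it adds no information.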

The only things you can do are rough approximations, reductions and projections, but none of these can actually represent what is happening inside.

In the 2D case, the scikit-learn iris example below keeps only the first two features, fits a logistic regression, and colors every point of a mesh by its predicted class, so the decision boundaries show up as the edges between colored regions:

```python
# Code source: Gaël Varoquaux
# Modified for documentation by Jaques Grobler
# License: BSD 3 clause

import numpy as np
import matplotlib.pyplot as plt
from sklearn import linear_model, datasets

# import some data to play with
iris = datasets.load_iris()
X = iris.data[:, :2]  # we only take the first two features.
Y = iris.target

h = 0.02  # step size in the mesh

# we create an instance of the logistic regression classifier and fit the data.
logreg = linear_model.LogisticRegression(C=1e5)
logreg.fit(X, Y)

# Plot the decision boundary. For that, we will assign a color to each
# point in the mesh [x_min, x_max] x [y_min, y_max].
x_min, x_max = X[:, 0].min() - 0.5, X[:, 0].max() + 0.5
y_min, y_max = X[:, 1].min() - 0.5, X[:, 1].max() + 0.5
xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))
Z = logreg.predict(np.c_[xx.ravel(), yy.ravel()])

# Put the result into a color plot
Z = Z.reshape(xx.shape)
plt.figure(1, figsize=(4, 3))
plt.pcolormesh(xx, yy, Z, cmap=plt.cm.Paired, shading="auto")

# Plot also the training points
plt.scatter(X[:, 0], X[:, 1], c=Y, edgecolors="k", cmap=plt.cm.Paired)
plt.show()
```
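For a linear model with exactly two features, the hyperplane is a line and can be drawn directly instead of coloring a mesh. Only the first lines of the `intersect(rect, line)` helper mentioned in the text survived, so what follows is a reconstruction sketch under assumptions, not the original code. It assumes `rect = (xmin, xmax, ymin, ymax)` and that `line = (a, b, c)` stands for the line a·x + b·y + c = 0. It is plain Python and raises `ValueError` where the original apparently used an `assert`.

```python
def intersect(rect, line):
    """Clip the line a*x + b*y + c = 0 to the rectangle (xmin, xmax, ymin, ymax).

    Returns the distinct points where the line crosses the rectangle's border
    (normally two endpoints; an empty list if the line misses the rectangle).
    """
    xmin, xmax, ymin, ymax = rect
    a, b, c = line
    if a == 0 and b == 0:
        raise ValueError("a and b cannot both be zero")
    points = []
    if b != 0:
        # Left and right edges: solve for y at x = xmin and x = xmax.
        for x in (xmin, xmax):
            y = -(a * x + c) / b
            if ymin <= y <= ymax:
                points.append((x, y))
    if a != 0:
        # Bottom and top edges: solve for x at y = ymin and y = ymax.
        for y in (ymin, ymax):
            x = -(b * y + c) / a
            if xmin <= x <= xmax:
                points.append((x, y))
    # A line through a corner is found on two edges; keep one copy.
    unique = []
    for p in points:
        if not any(abs(p[0] - q[0]) < 1e-12 and abs(p[1] - q[1]) < 1e-12 for q in unique):
            unique.append(p)
    return unique


print(intersect((0, 4, 0, 4), (1.0, -1.0, 0.0)))  # the diagonal y = x
```

With a fitted two-feature linear `sklearn.svm.SVC` named `clf`, the boundary would be `intersect(rect, (clf.coef_[0][0], clf.coef_[0][1], clf.intercept_[0]))`. The margin lines w·x + b = ±1 correspond to replacing the last coefficient with `clf.intercept_[0] - 1` and `clf.intercept_[0] + 1`. The returned endpoints can be unpacked with `xs, ys = zip(*points)` and passed to `plt.plot(xs, ys)`.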
